How to write with AI

Or at least, how not to.

The art of letters, claimed these advocates of pen and pencil, would no longer be an art but a trade. Nimble fingers would mean nimble thoughts. Nimble thoughts would mean the end of careful weighing, careful choosing, and the careful setting forth of the results of that weighing and choosing…. Poetry written on a typewriter is apt to become hackneyed, stilted, machine-like. The fingers are slaves of habit and soon make their choice of phrases, with the result that originality is menaced.

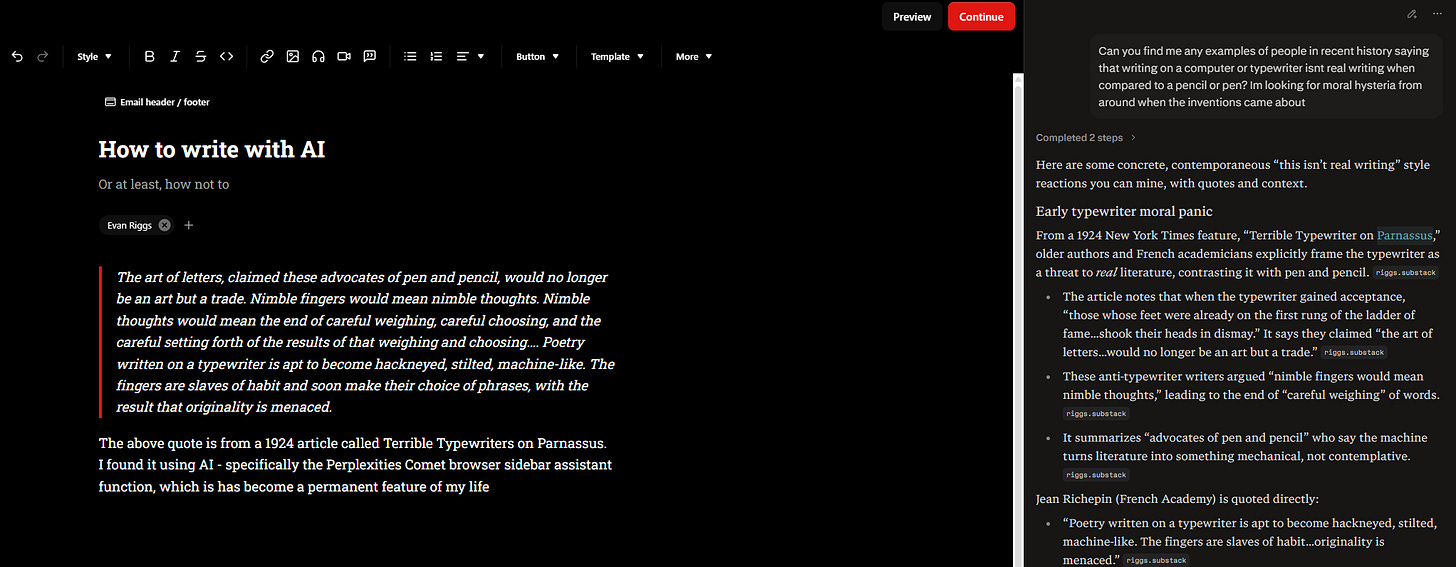

The above quote is from a 1924 article called Terrible Typewriters on Parnassus. I found it using AI - specifically, the Perplexity Comet browser sidebar assistant function, which has basically become a permanent feature of my life.

What a great example of exactly what I was looking for! I read the full thing immediately, and didn’t even see that I had taken the same quote to open this essay until I went to insert the above screencap!

But how could you ever trust me on that? I’ve already admitted to what many consider to be the new cardinal sin of writing: any form of AI use whatsoever. Or have I? It’s no different from Googling at this stage, and the text I could have potentially copied over isn’t even AI-generated; it’s a quote from over 100 years ago.

Here’s the problem though.

A quick scroll down and we are already out of search territory. Now, it’s giving me guidance. Not just a tool, but a shortcut. Maybe now we’re moving away from an art to something closer to a trade — and a Faustian one at that. I don’t have to think, not carefully, not nimbly, not at all, and words can appear anyway. This could be just some guidance, or it could be taken further, a full outline, or even entire paragraphs copied directly in from the prompt response. Maybe that’s what I am doing right now, did you see the em-dash? Is there not a sentence that uses it’s not X, but Y??

Again, you’ll just have to trust that I did indeed write all that. And I did, except the em-dash, which I copied over to make a point. There always seems to be at least one of them floating around close at hand. And maybe, because of that, I’ve started using them a bit more, small symbols and ways of saying things slowly sliding into my subconscious. Having stared at so much slop it’s now slipping out of me. I am a little worried that I’ve read so much AI text by now, its own particular style will be, in whatever way, adapted into mine. Now it seems that the only way to assure readers that you actually wrote something is to be too differentiated, throw guidelines out the window, maybe insert some typos for good measure, or at least don’t correct them all when you run your drafts through Claude for a copy edit.

This is all to say that, three years after the debut of ChatGPT, we still haven’t figured out how to calibrate appropriate AI use for writers. Actually, it seems like we are getting worse in our understanding of what’s possible and what’s pathetic as the tools get better. What used to be just a chatbot can now produce deep research reports modeled after your own voice, having distilled some markdown file guidance from everything you’ve ever written. Agents trained to think like all your favorite authors and editors can pore over your drafts while you sleep, making suggestions, shaping your thoughts, while not exactly sharpening your thinking. Rather than jotting down notes while on a walk, you can send some AI off to do all of this, in sync, the second you have an idea. Rather than sitting with a thought for a while, a working draft could be delivered before you’re back home. If you’re like me, there’s nothing stopping you from doing that right now with the two dozen essay ideas you promised you’d get back to one day. You could basically use this paragraph as the prompt!

For those who make their living by hitting the right keys in the right order, reading all of that was probably a bit uncomfortable. With the tech bros building our future, their unease never seems to step into the realm of what all this means for writers; it’s more focused on the existential risk of sentient paperclips, sorting polycules, and other silly things. But in one of those ink-drowned places like Westminster (an ocean and a continent away from San Francisco, a place that prides itself on being where real writers can write), all sorts of bad behavior, both in AI use and in declaring what’s acceptable AI use, are starting to creep in. Recently, a minor scandal has emerged over Matt Goodwin, now tagged with the unfortunate moniker of MattGPT, releasing a book that appears to have been written largely with the help of AI. There are hallucinated quotes, there are GPT artifacts in the references, there is the fact that this book has come out a little over a year after his last, and there is the publisher itself, Northstar, whose services seem to be entirely focused on having AI, ghostwriters, and ghostwriters using AI do all the work for you.

The weirdest part of this, at least for me, is not the fact that AI was obviously used in writing some of this book, but that it was used so poorly. The incompetence in applying some artificial intelligence seems disproportionate to the intense reaction to Matt for using it — shouldn’t good use be seen as worse than easily dismissed slop? Again, it’s now very easy to use AI agents in such a way that it would be difficult to distinguish Matt’s voice from typical AI speech patterns — they could have easily been trained on the entire set of books he actually wrote himself — or have them quickly run through his list of references to remove any GPT-tells. Maybe it doesn’t help, as Mary Harrington has pointed out, that this book has apparently sold quite well. It really shouldn’t be a shock to anyone with any knowledge of political media that the eager audiences for the ever-frenzied culture war drumbeat couldn’t care less about how the show keeps going, so long as it simply does. But for peer competitors, those who are committed to writing things the right way, Matt’s attempt at cashing in does represent a threat, a boundary being crossed, a new potential behavior to be brutally curtailed.

To quote a recent Derek Thompson tweet in full:

Writing is thinking, and people who outsource the full writing process to AI will find their screens full of words and their minds empty of thought.

But also: All writing involves and has always involved “outsourcing”—reaching outside of the writer’s mind to pull in pieces of the world, before and after the work of making words. Writers draw their ideas from other people, books, articles; after writing they often rely on outside copy editors, fact checkers, transcribers.

Some of this stuff is just going to be done by AI in the future, and the boundaries between “good behavior” and “bad behavior” will have some blurry lines, and we should be honest and open about the blur rather than declare everybody with an open Claude window a part of the slopclass.

Anybody who says AI transcription of long interviews obliterates the identity of a writer is being a little silly. But what about copy editing? Claude is a fast and decent copy editor, but is it inhuman to rely on it for that function? Is it moral to google “Econ papers on income transfers for child poverty” but immoral to write the same thing as an AI prompt? What about throwing 500 muddled words into ChatGPT and saying “does this make any sense? what do you think I’m trying to say here?” That’s going to be useful for some people.

At an aesthetic level, I don’t like copy-pasting AI paragraphs into articles and pressing publish. That feels like me cheating myself. It feels like de-skilling. But the idea that “using AI” is anathema to the identity of being a writer is, in a few years, going to sound an awful lot like claiming that “using a computer” is a violation of the craft of writing. (Which, haha, maybe it is and we should all just go back to Steinbeck and his pencils; but talk about ships that have sailed.)

Obviously, having ghostwriters use AI to throw together a rip-off of The Strange Death of Europe is a bad move. But so is having ghostwriters to begin with! It’s not obvious to me why having another human intelligence handle all the work and then stamping your name on the cover is any better than just outsourcing all your thinking to a data center. And this happens all the time! Maybe the audiences don’t care, but the author should, because they are sabotaging themselves when it comes to the next step of shipping some writing: the media tour. Now, writing a book is largely an excuse to go on podcasts, give speeches, and use these invitations to cash in on the newfound “authorly” credibility. This is where many writers actually make their money, if they make any at all. And you can’t show up to this leg of the journey with your mind empty of thought, because now it’s live and you need to know your own work. What’s worse, the more people who give in to this path, the easier it will be for a similar sort of scorn to spread: any author who writes something controversial can now have their work denigrated as slop, even if no AI was used at all.

And yet, I see much more derision directed at those who are beginning to write alongside artificial intelligence than I have ever seen for authors using ghostwriters, or using unnamed editors who should really be considered co-authors given their contributions, or writing essays that are mostly just quotes with a couple of rephrased sentences thrown in between. While Thompson is right that determining what the right type of writing will look like is going to take some time, I am more curious about why there is such vitriol against any form of AI use at all in certain sectors. The only explanation I can come back to is where we started, with the handwritten diatribes against the typewriter. Those most resistant to the idea that there can be any form of “good behavior” when using AI to write will be those who succeeded in writing before it was ever possible, and those who can’t help but romanticize a past that will never come back. And one of the greatest differences from past technological displacements is that, this time, the people precisely equipped to understand, diagnose, and demand someone do something, anything, about the situation are the writers themselves.

Writing, like AI, is both a technology (maybe the most powerful form of technology ever discovered) and the thing that drives technological progress. When Gutenberg found a way to mass-produce thought, there were all sorts of unforeseen complications. Deliberate lies, unintentional half-truths, and endlessly debatable new ideas spread like wildfire and sent Europe into chaos. Scribes lost their jobs. And critics complained that a slew of low-quality books were breaking apart what had always been understood as “true” knowledge. And yet, in the end, Gutenberg created more opportunities for writers than ever before. Almost all great literature is downstream from his press because, without distribution, it’s almost certain that not enough people would have ever had the chance to read Frankenstein or Paradise Lost or Fahrenheit 451 or any of the classics for them to be considered classics. Even those who decried the typewriter a century ago would certainly have been impressed with Bradbury churning out the first version of his masterpiece on a pay-by-the-hour typewriter in his local library in a little over a week. Did his nimble work hamstring the originality of his vision, or was it necessary to bring it to life? Could that same argument be used to let a million new writers bloom with pay-by-the-token tools? Maybe. But remember, the entire point is to fill your mind, not the page.

These are the questions all writers and readers will have to grapple with as we rush toward whatever’s next. My advice for anyone committed to figuring out how to fill both mind and page would be to set some personal ground rules and chain yourself to their mast, maybe even write them down. Personally, I refuse to publish anything that is copied and pasted from AI. I try to only use it for search when writing my drafts and save a more robust use for a copyedit at the end. I ask it not to provide any suggestions outside the grammatical. I’m willing to let it take more of a role in behind-the-scenes docs, all those necessary pitches, plans and proposals that go into intellectual work, but even then, everything should receive multiple revisions before being sent out. As for one of Thompson’s rhetorical questions (“What about throwing 500 muddled words into ChatGPT and saying ‘does this make any sense? what do you think I’m trying to say here?’ That’s going to be useful for some people.”), I don’t think that will be useful for anyone, and I refuse to do it. Making yourself make sense is all the fun there is in writing; don’t deprive yourself of that.

I agree with this. If you let AI write for you, you lose your main USP (unique selling proposition): your own voice. It is the part of your writing that differentiates you from everyone else. Without it, you are no different from anyone else.